Agent Mapping

Identifying and categorizing the bot agents currently crawling your domain.

Precisely control user-agent behavior to maximize crawl efficiency and keep search engines from wasting crawl activity outside your high-value directories.

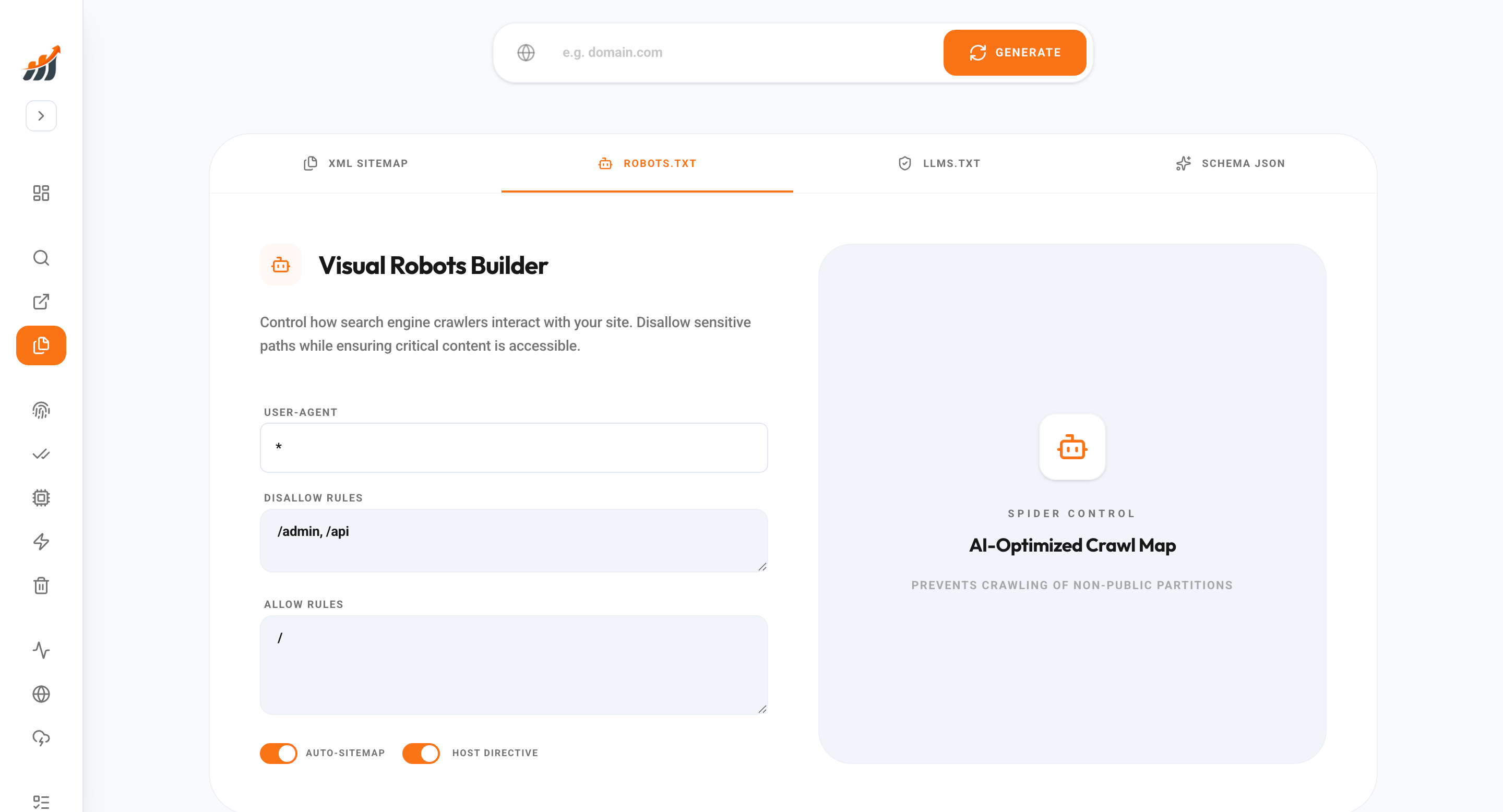

Your robots.txt file is typically the first file a search bot requests when visiting your domain. If it is misconfigured, search engines can waste crawl budget on duplicate filter pages, private directories, or low-value scripts instead of your core content. Our Robots Directive Architect lets you define granular Allow/Disallow rules across 30+ specific user-agents (Googlebot, Bingbot, Baiduspider, etc.). We scan your site structure and automatically suggest exclusion paths for common technical-debt areas such as /cgi-bin/, /temp/, and dynamic faceted navigation. This helps crawlers prioritize your high-value indexable content, which supports your ranking velocity and domain health scores.
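As a rough illustration of what per-agent Allow/Disallow rules look like once rendered, here is a minimal Python sketch. It is not the Robots Directive Architect's actual implementation: the `build_robots_txt` helper, the shape of the `rules` dictionary, and the `/search/` path are assumptions made for this example.

```python
def build_robots_txt(rules):
    """Render per-user-agent rules as robots.txt text.

    `rules` maps a user-agent name to a dict with optional "allow" and
    "disallow" path lists. This structure is hypothetical, chosen only
    to keep the example small.
    """
    lines = []
    for agent, directives in rules.items():
        lines.append(f"User-agent: {agent}")
        for path in directives.get("allow", []):
            lines.append(f"Allow: {path}")
        for path in directives.get("disallow", []):
            lines.append(f"Disallow: {path}")
        lines.append("")  # a blank line separates rule groups
    return "\n".join(lines).rstrip() + "\n"


# Example rules: block common low-value paths for every bot, and add one
# extra exclusion (an illustrative /search/ path) for Bingbot only.
rules = {
    "*": {"disallow": ["/cgi-bin/", "/temp/"]},
    "Bingbot": {"disallow": ["/cgi-bin/", "/temp/", "/search/"]},
}

robots_txt = build_robots_txt(rules)
print(robots_txt)
```

Note that a bot matching a named group follows only that group, which is why the Bingbot group repeats the shared exclusions instead of relying on the `*` group.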

Bot management is non-negotiable for modern SEO. SEO Matrix keeps your crawl directives tuned for search performance.

Our engine maps your physical and virtual directory structure and identifies areas that contain non-indexable content. We then use pre-built templates for different CMS architectures (WordPress, Shopify, Next.js) to generate a robust robots.txt file. We also include a real-time 'Validator' that checks your generated rules against search engine standards to prevent accidental de-indexation of your homepage or key landing pages. Before you go live, our system runs a 'Bot Simulation' that shows you exactly which URLs your directives will hide from public search indexers.
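The idea behind a validator and a bot simulation can be sketched with Python's standard-library `urllib.robotparser`. This is only an approximation under stated assumptions, not the product's engine: `simulate_bot` and `find_blocked_key_pages` are hypothetical helper names, and the standard-library parser uses simple prefix matching, so it does not reproduce the wildcard handling that some search engines apply.

```python
from urllib.robotparser import RobotFileParser


def simulate_bot(robots_txt, user_agent, paths):
    """Return {path: allowed} for one user-agent against robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {path: parser.can_fetch(user_agent, path) for path in paths}


def find_blocked_key_pages(robots_txt, user_agents, key_paths):
    """List (agent, path) pairs where a key page would be blocked."""
    blocked = []
    for agent in user_agents:
        results = simulate_bot(robots_txt, agent, key_paths)
        for path, allowed in results.items():
            if not allowed:
                blocked.append((agent, path))
    return blocked


# Illustrative rules: low-value directories are excluded for all bots.
safe_rules = "User-agent: *\nDisallow: /cgi-bin/\nDisallow: /temp/\n"

# A common mistake: a bare "Disallow: /" blocks the entire site.
risky_rules = "User-agent: *\nDisallow: /\n"

print(simulate_bot(safe_rules, "Googlebot", ["/", "/temp/cache.html"]))
print(find_blocked_key_pages(risky_rules, ["Googlebot"], ["/"]))
```

Running a check like this against the homepage and key landing pages before publishing a robots.txt file catches the most damaging class of error: a rule that blocks pages you want crawled.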

Take control of your crawl efficiency with automated bot management. We provide the tools to ensure bots spend their time wisely on your domain.